
I am a fourth-year PhD student in CS at the University of Michigan, advised by Professor Andrew Owens and supported by an NSF GRFP fellowship. I currently study computer vision, and I have previously done research in deep reinforcement learning and representation learning. I was lucky enough to work as an undergrad at UC Berkeley under Sergey Levine and Coline Devin, as well as Alyosha Efros and Taesung Park, and as an intern at FAIR under Lorenzo Torresani and Huiyu Wang.

Github / Google Scholar / Twitter / Email


I am currently interested in generative image models, how to control them, and what they can be used for. I've also worked on leveraging differentiable models of motion for image and video synthesis and understanding, as well as on representation learning and multimodal learning.


Daniel Geng, Inbum Park, Andrew Owens

In Submission

A sister project of "Visual Anagrams": another zero-shot method for making more types of optical illusions with diffusion models, with connections to spatial and composition control of diffusion models and to inverse problems.

arXiv / webpage / code


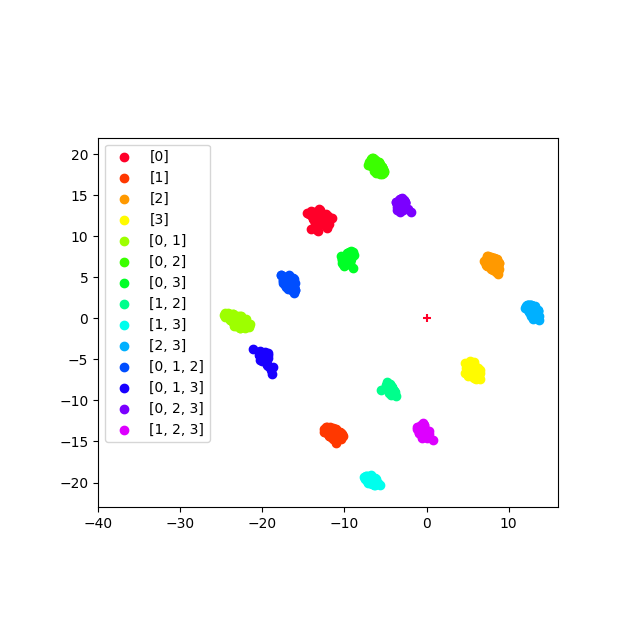

Daniel Geng, Inbum Park, Andrew Owens

CVPR, 2024 (Oral)

A simple, zero-shot method to synthesize optical illusions from diffusion models. We introduce Visual Anagrams: images that change appearance under a permutation of pixels.

arXiv / webpage / code / colab


Daniel Geng, Andrew Owens

ICLR, 2024

We achieve diffusion guidance through off-the-shelf optical flow networks. This enables zero-shot, motion-based image editing.

arXiv / webpage / code


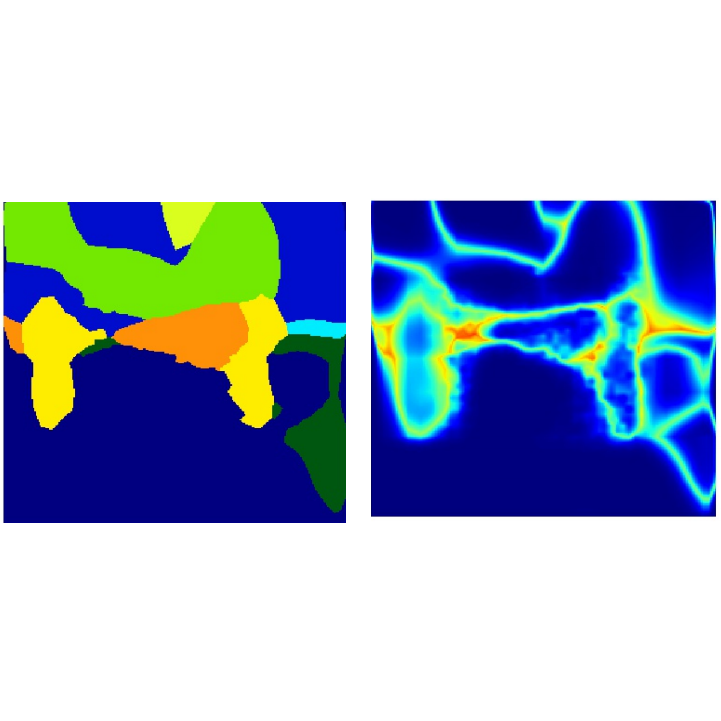

Daniel Geng*, Zhaoying Pan*, Andrew Owens

NeurIPS, 2023

By differentiating through off-the-shelf optical flow networks, we can train motion magnification models in a fully self-supervised manner.

arXiv / webpage / code


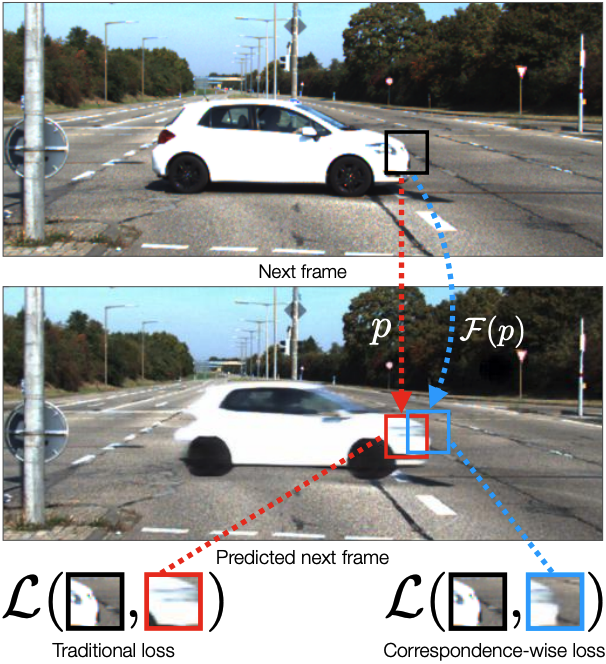

Daniel Geng, Max Hamilton, Andrew Owens

CVPR, 2022

Pixelwise losses compare pixels by absolute location. Instead, comparing pixels to their semantic correspondences surprisingly yields better results.

arXiv / webpage / code


Glen Berseth, Daniel Geng, Coline Devin, Nicholas Rhinehart, Chelsea Finn, Dinesh Jayaraman, Sergey Levine

ICLR, 2021 (Oral)

Life seeks order. If we reward an agent for stability, do we also get interesting emergent behavior?

arXiv / webpage / oral


Coline Devin, Daniel Geng, Trevor Darrell, Pieter Abbeel, Sergey Levine

NeurIPS, 2019

Learning a composable representation of tasks aids in long-horizon generalization of a goal-conditioned policy.

arXiv / webpage / short video / code


Sayna Ebrahimi, Daniel Geng, Trevor Darrell

Given any classification architecture, we can augment it with a confidence network that outputs calibrated class probabilities.


Website template from Jon Barron.
